How Culture Shapes What Counts as "Real Music" in the AI Era (and why we're all kinda lost rn)

Culture shapes what we call “real music,” grounding it in human intention, lived experience, and emotional connection. As AI-generated tracks flood the charts, those ideas of authenticity are being challenged, and we’re left renegotiating what “real” even means.

In every aspect of life, culture shapes our understanding of what is deemed authentic, valuable, and essentially real. In music, something is considered “real” when it is linked to lived experience, human intention, and the emotional connection of what is known as “musicking.” The empathy shared between a performer and a listener is human on both ends. AI, or artificial intelligence, is just what it sounds like: a computer’s simulation of human intelligence. AI lacks subjective awareness of itself and its environment, which humans know as consciousness. It doesn’t have genuine emotions or lived trauma, so it can’t connect with people the way another person can. That is a big reason why so many listeners describe AI-generated music as soulless and empty.

As AI music floods the charts, the cultural definition of authenticity, and of what counts as “real music,” is being challenged and redefined. Here are some of the ways culture shapes these beliefs, plus the receipts to back them up!

How Culture Defines What's "Real" (and what's 🧢)

One of the clearest signs that people care more about who made the music than how it sounds is the “Indistinguishability Paradox.” In a 2025 Deezer/Ipsos survey, 97 percent of listeners failed a blind test asking them to tell fully AI-generated tracks apart from human-made ones. Yet when participants were told a track was AI-generated, most said they felt “uncomfortable” and rated its worth and importance lower. So although people can’t reliably distinguish AI-generated music from human-made music, they still place greater cultural value on the human-made kind. What matters is who made it, not how it sounds. That also explains why 80 percent of survey participants want AI-generated tracks to be clearly labeled: music lovers want to know where a track comes from so they can decide how much to value it, even when they can’t hear the difference. A Pew Research study points the same way, finding that people under 30 are the most critical of AI-generated music, with 53 percent saying they would like a song less if it was generated by AI. The culture, in other words, demands a human source and cares far less about a flawless sound.

As a matter of fact, the culture actively values controlled imperfection in music. The idea is everywhere today, but it was heavily popularized by producer J Dilla. He turned off quantization (the drum-machine feature that automatically snaps notes to a perfect grid) and bent the machine into offbeat, unmistakable rhythms that sounded distinctly human. That unquantized approach can still be heard in 2026 in the work of producers like Kaytranada, Monte Booker, and Knxwledge. It also points to one of the main reasons people reject AI music: they hear it as predictable, formulaic, and unwilling to take creative risks.

Furthermore, the culture treats human effort as a marker of value and authenticity. Traditionally, music culture prizes the “struggle narrative”: putting in years of practice, taking your Ls, and slowly mastering your craft. Plenty of our favorite artists have one, including Kendrick Lamar, The Beatles, Nina Simone, Billie Holiday, Prince, Stevie Wonder, Aretha Franklin, SZA, Michael Jackson, Beyoncé, Jay-Z, Bruce Springsteen, Eminem, and so many more. AI skips the learning phase of going from bad to good entirely, which cuts against the cultural belief that real music comes from years of practice and mistakes. A perfect example is “Heart on My Sleeve,” the 2023 track by Ghostwriter977 that used AI-cloned vocals of Drake and The Weeknd and sparked intense backlash across the music ecosystem. Around the same time, Drake posted “this the final straw AI” on his IG story.

Don’t get me wrong, the outrage came from every direction: artists, fans, record labels, music critics, the Human Artistry Campaign, and legal experts. But the main reason people saw the track as worthless is that it bypassed the years of development real human artists have to endure. SZA made this point when she said she felt “at war” with AI, denouncing the tech for manufacturing “weird, stereotypical struggle music” that imitates Black artists and music: “I’m up against anti-intellectualism and doing things easy. The type of blend of information my human experience provides, AI can’t even be prompted to fuck with.”

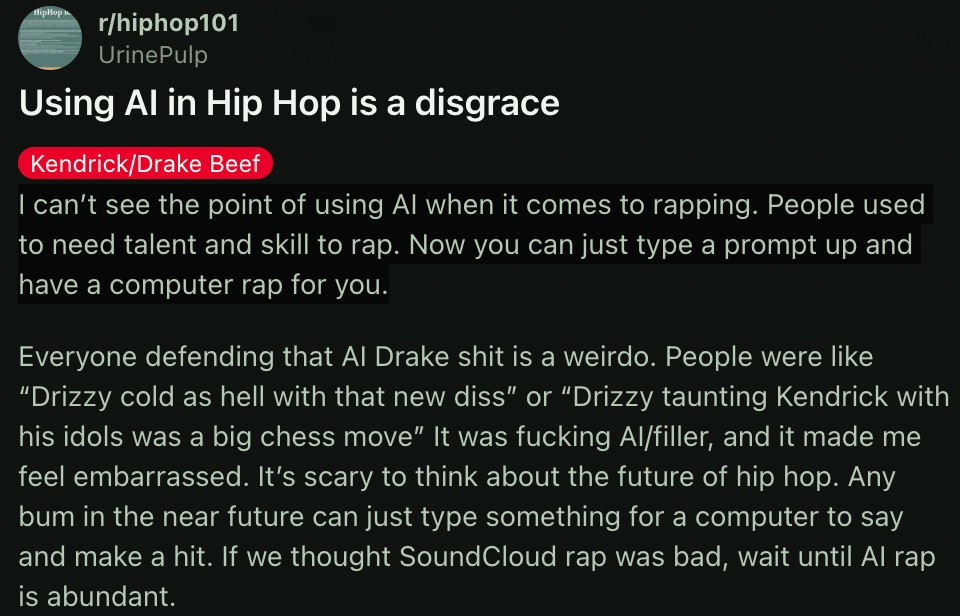

Even as AI music becomes more prominent, the cultural rules of authenticity treat cloning deceased legends as off-limits and disrespectful af. In April 2024, during the notorious Drake and Kendrick Lamar beef, Drake used AI to clone the voices of the late Tupac Shakur and of Snoop Dogg for his diss track “Taylor Made Freestyle.” The culture strongly opposed it:

“Any bum in the near future can just type something for a computer to say and make a hit. If we thought SoundCloud rap was bad, wait until AI rap is abundant.”

Reddit user UrinePulp sums up several key concerns raised in this piece. The line “any bum in the near future can just type something for a computer to say” speaks directly to AI eliminating the struggle narrative, the phase of going from bad to good. The comment’s embarrassment and concern about “the future of hip hop” also show that people value hip hop’s tradition of lyrical skill and authenticity, a cultural standard that AI music inherently weakens by skirting past genuine lived experience and artistic development. Many fans, like UrinePulp, viewed the stunt as creatively distasteful and even as “cultural imperialism.” For the most part, fans saw it as Drake improperly using someone else’s culture and insulting the legacy of Tupac and his commitment to real social issues.

The Cultural Double Standard in AI Music

The same mental process that leads people to take rap lyrics at face value while treating country lyrics as storytelling also shapes how we evaluate AI-generated music. The Johnny Cash Bias is a phenomenon in which identical lyrics are interpreted differently depending on the genre they’re associated with. Studies from 1999 and 2017 found that people “deemed identical lyrics more literal, offensive, and in greater need of regulation when they were characterized as rap compared to country.” If a genre label alone can change how the same words are judged, an “AI-generated” label likely does the same. People don’t judge AI music impartially, for what it actually is (technology); they judge it through cultural bias and the expectations attached to different kinds of music.

There is also a clear cultural boundary between what counts as acceptable assistance and what is seen as a threat to entire livelihoods. A study by Ari’s Take found that 87 percent of producers already use AI tools in music creation, but only 13 percent use them to generate an entire track. In the production kitchen, AI is welcome as a sous chef handling audio, code, or video tasks, but not as the head chef, aka the executive producer. Tracks produced entirely by AI threaten the traditional understanding of who created the music and what gives it authenticity.

Why Capitalism Has Us All Defending "Real" Music

Economic forces play a powerful role in shaping what we believe to be real or valuable, even though most cultural beliefs are rooted in artistic value and emotional connection.

Scarcity vs. unlimited supply: Before AI music was a thing, scarcity created value. Only people with the talent or resources could produce high-quality music. Now AI is flooding the market, with around 10 percent of music creators using generative AI, directly threatening the competitive advantage of established artists and the entire economic model built on exclusivity.

The analog comeback: vinyl, live shows, and slow listening. Right now there is a counter-revolution toward the physical, just as streaming platforms like Deezer report that over 50,000 fully AI-generated tracks are being uploaded daily. Listeners who are tired of subscriptions are driving the comeback of cassettes, CDs, and vinyl, which make for more focused, meaningful listening (no algorithmic playlists).

The irreplaceable human experience of concerts: Even though AI is making recorded music easier to produce, the culture overwhelmingly values live performance because it is an authentic, irreplaceable experience. You can measure this in tour grosses:

The Weeknd’s After Hours ’Til Dawn Tour, grossing $1 billion,

Taylor Swift’s The Eras Tour, grossing over $2 billion,

NBA YoungBoy’s Make America Slime Again (MASA) Tour, grossing $70 million.

These numbers show that people will still pay a premium to see a human perform live, even now that AI has made recorded music algorithmically replicable. Live concerts are only getting more popular, and they remain the best way for artists and fans to truly connect.

Why Our Beliefs About AI Music Actually Make Sense

If scientists could prove that our brains respond differently to human-made and AI-generated music (even when the two sound the same), that could explain why people prefer human-made music. But there’s a problem with this theory: the Deezer study found that 97 percent of respondents failed a blind test to distinguish fully AI-generated music from human-made music. In other words, most of our ears can’t tell the difference, which challenges the idea that we innately “feel” when music is authentic. That points back to culture: we value music for its emotion, human experience, and unique perspectives, and no artificial intelligence has ever lived in the real world. AI can’t genuinely feel happiness, sadness, grief, or joy, and it can’t speak on social issues from lived experience. Any emotion it shows is, by definition, artificial. But what if someone uses AI to express their own true emotions, like using Grimes’s AI voice to say what they might be too scared to say themselves? Does that make it real again? And does Grimes’s consent to having her voice cloned, in exchange for a royalty split, culturally validate the move?

Moreover, even with AI’s bad rep, the culture does accept AI when it is used as a transparent, collaborative tool that expands human creativity and intention rather than bypassing or replacing human artistry. The clearest example is Kendrick Lamar’s “The Heart Part 5” music video, in which Lamar used deepfake technology to morph into Black figures such as Kobe Bryant, Will Smith, Kanye West, and Nipsey Hussle. The culture loved it! Lauren London called it “powerful art.” Because Lamar reportedly received the blessings of the estates and families involved first, and then used the tech to show radical empathy and collective trauma, revealing a deep understanding of what real pain looks like, the culture deemed it highly authentic.

Lastly, if AI does end up replacing human musicians, profits will concentrate among tech companies rather than artists. Human creators already face a dual crisis. Online, the industry’s hasty embrace of AI means thousands of synthetic tracks are uploaded every day, eating into human artists’ share of royalty revenue. Offline, the independent venues where many artists build their careers struggle to compete with what critics call a monopoly: Live Nation and its ticketing arm, Ticketmaster.

All of this raises a fundamental question: what’s the point of music anyway? Is it aesthetic pleasure, human connection, communication, or all of the above? There’s no single correct answer to what counts as authentic or valuable music. The answer is negotiated by the culture through evolving creativity, psychological biases, economic interests, and shifting ideas of what is ethical. The Ghostwriter phenomenon, the Johnny Cash bias, and AI detection that doesn’t work reliably all point the same way: authenticity is a retroactive decision based on who made the music, what genre it falls into, and how well it sold. Right now we’re in a transitional period where people haven’t agreed on the rules and cultural norms haven’t been established. Some artists are monetizing AI, some are praised for using it, and others are dragged for it, all while legal systems struggle to keep up. To make fair judgments in today’s volatile music market, the culture will need to decide what is AI-acceptable and what is human-artistic.
